UAV Technologies

Sensing, Control and Swarm.

Pose Estimation (Visual Odometry)

This algorithm aims to estimate the position and orientation of the UAV using computer vision techniques. This information assists the GPS system, improving its efficiency and reliability, and provides absolute localization of the system when GPS is unavailable.

The localization algorithm solves a set of world-to-frame and frame-to-frame homographies from feature points that are tracked and matched across the images captured by the onboard cameras. Feature matching combines SIFT and FREAK to improve matching performance while reducing the computational cost.
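As an illustration of the frame-to-frame step, the sketch below estimates a homography from exactly four point correspondences by solving the 8x8 linear system obtained when the last entry of the matrix is fixed to 1. It is a minimal example, not the implementation used on the UAV: the function names are hypothetical, the point pairs are assumed to come from an earlier feature matching stage, and a real pipeline would normally use many matches with outlier rejection.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def homography_from_4_points(src, dst):
    """Estimate a 3x3 homography (h33 = 1) mapping src[i] to dst[i]."""
    if len(src) != 4 or len(dst) != 4:
        raise ValueError("exactly four correspondences are required")
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # Two linear equations per correspondence.
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(h, point):
    """Map a 2D point through the homography h."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    u = (h[0][0] * x + h[0][1] * y + h[0][2]) / w
    v = (h[1][0] * x + h[1][1] * y + h[1][2]) / w
    return (u, v)


# Example: matched corners of a square seen in two consecutive frames.
previous_frame = [(0, 0), (1, 0), (1, 1), (0, 1)]
current_frame = [(1, 3), (3, 3), (3, 5), (1, 5)]
H = homography_from_4_points(previous_frame, current_frame)
```

Once `H` is known, `apply_homography(H, p)` predicts where any point `p` of the previous frame appears in the current one.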

The algorithm has been verified with real flights (both indoor and outdoor), and the results obtained demonstrate the high performance of the developed system, compared to inertial sensors (IMU) and GPS-based systems.

Tracking Obstacle Detection and Avoidance

An obstacle detection algorithm has been proposed that uses a single camera and mimics the way humans perceive an approaching obstacle.

The obstacle detection algorithm estimates the size changes of the detected feature points and combines them with the expansion ratio of the convex hull constructed around those points over a sequence of monocular frames. In addition, the algorithm estimates the 2D position of the obstacle in the image and passes it to the control system, giving the UAV the ability to perform avoidance maneuvers.
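The two cues described above can be sketched as follows. This is a simplified illustration under stated assumptions: keypoints are given as `(x, y, size)` tuples already matched between two frames, the function names are hypothetical, and both thresholds are placeholder values rather than the ones used in the actual system.

```python
def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices counter-clockwise."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(vertices):
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    n = len(vertices)
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def is_approaching(prev_kps, curr_kps, size_ratio_min=1.2, area_ratio_min=1.5):
    """Flag an approaching obstacle when both cues grow between frames.

    The two thresholds are illustrative assumptions.
    """
    if not prev_kps or not curr_kps:
        return False
    prev_area = polygon_area(convex_hull([(x, y) for x, y, _ in prev_kps]))
    curr_area = polygon_area(convex_hull([(x, y) for x, y, _ in curr_kps]))
    prev_size = sum(s for _, _, s in prev_kps) / len(prev_kps)
    curr_size = sum(s for _, _, s in curr_kps) / len(curr_kps)
    if prev_area == 0.0 or prev_size == 0.0:
        return False
    size_ratio = curr_size / prev_size
    area_ratio = curr_area / prev_area
    return size_ratio >= size_ratio_min and area_ratio >= area_ratio_min


# Example: the same five keypoints, twice as spread out and 1.5x larger.
frame_a = [(0, 0, 4.0), (10, 0, 4.0), (10, 10, 4.0), (0, 10, 4.0), (5, 5, 4.0)]
frame_b = [(2 * x, 2 * y, 6.0) for x, y, _ in frame_a]
```

Requiring both ratios to exceed their thresholds is one possible way to combine the cues; a weighted score would be another.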

Path Planning

This approach uses the Simulated Annealing (SA) algorithm to generate the paths of the UAV. In addition, a waypoint-tracking solution has been proposed to handle waypoint following during autonomous flights. The position and orientation of the UAV are estimated by two methods: the vision-based algorithm and an estimate of incremental movement from the onboard inertial sensor (IMU).
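A minimal sketch of Simulated Annealing applied to path generation is shown below. It assumes the problem is to order a fixed set of 2D waypoints so that the total path length is short, with the start point kept fixed; the cost function, the segment-reversal move, the cooling schedule and all parameter values are illustrative assumptions, not the settings of the actual planner.

```python
import math
import random


def path_length(path):
    """Total Euclidean length of a polyline through the waypoints."""
    return sum(math.dist(path[i], path[i + 1]) for i in range(len(path) - 1))


def anneal_path(waypoints, t_start=10.0, t_end=1e-3, cooling=0.995, seed=0):
    """Reorder waypoints (first one fixed) to shorten the path."""
    current = list(waypoints)
    current_len = path_length(current)
    if len(current) < 3:
        return current, current_len
    rng = random.Random(seed)
    best, best_len = current[:], current_len
    temperature = t_start
    while temperature > t_end:
        # Candidate move: reverse a random segment that excludes the start.
        i, j = sorted(rng.sample(range(1, len(current)), 2))
        candidate = current[:i] + current[i:j + 1][::-1] + current[j + 1:]
        candidate_len = path_length(candidate)
        delta = candidate_len - current_len
        # Always accept improvements; accept worse paths with a
        # probability that shrinks as the temperature drops.
        if delta < 0 or rng.random() < math.exp(-delta / temperature):
            current, current_len = candidate, candidate_len
            if current_len < best_len:
                best, best_len = current[:], current_len
        temperature *= cooling
    return best, best_len


# Example: a deliberately zig-zagging initial order.
initial = [(0, 0), (10, 10), (1, 1), (9, 9), (2, 2), (8, 8), (3, 3)]
planned, planned_len = anneal_path(initial)
```

The function returns the best ordering visited during the search, so the result is never longer than the initial path.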

The experimental results show the reliability of the proposed algorithm and its applicability in different applications and scenarios.

Autonomous Landing

In this work, an autonomous landing system has been designed that allows the UAV to approach and land on a designated landing area. It is based on detecting and tracking a pattern (helipad) in the images obtained by the cameras onboard the UAV.

For that, computer vision techniques have been implemented using the well-known OpenCV library. Navigation and landing are performed by sending real-time navigation commands based on the information obtained from the image analysis and the pattern search.
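One simple way to turn a detected pattern position into navigation commands is a proportional rule on the pixel offset, sketched below. Everything here is an assumption made for illustration: a downward-facing camera, the axis convention (image up corresponds to forward motion), the gains, the alignment tolerance and the function name. Detection of the pattern itself is assumed to have been done already.

```python
def landing_command(pattern_center, image_size, gain=0.5,
                    align_tolerance=0.1, descent_rate=0.3):
    """Return (forward, lateral, vertical) velocity commands.

    pattern_center: (x, y) pixel position of the detected helipad.
    image_size: (width, height) of the image in pixels.
    """
    cx, cy = pattern_center
    width, height = image_size
    # Normalised offsets in [-1, 1] relative to the image centre.
    err_x = (cx - width / 2.0) / (width / 2.0)
    err_y = (cy - height / 2.0) / (height / 2.0)
    # Assumed convention: pattern to the right -> move right,
    # pattern towards the top of the image -> move forward.
    lateral = gain * err_x
    forward = -gain * err_y
    # Descend only while the pattern is close to the image centre.
    aligned = abs(err_x) < align_tolerance and abs(err_y) < align_tolerance
    vertical = -descent_rate if aligned else 0.0
    return (forward, lateral, vertical)


# Example: pattern at the right edge of a 640x480 image.
command = landing_command((640, 240), (640, 480))
```

With the pattern centred, the horizontal commands vanish and only the descent remains.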

Modifications

- With minor modifications, the same methods are used for tracking moving landing areas.

- Another possible modification enables the UAV to move autonomously by detecting and following several markers placed along its path.

Flight Control

Fuzzy Control

The objective of this work is to develop a stability and position control system for the UAV based on fuzzy logic control, using visual information to stabilize and navigate the UAV.

For this purpose, an estimation filter was first designed to reduce possible sensor noise. The controller was then implemented in two versions. Stability Control takes as inputs the horizontal velocities (along the x and y axes) provided by the IMU and the angles (pitch, roll, yaw) obtained from the gyroscope. Position Control keeps the IMU and gyroscope data and adds the pose estimated by the visual odometry algorithm, allowing the UAV to navigate with more precision.
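To make the idea of a fuzzy controller concrete, the sketch below maps a single input (a normalised horizontal velocity error) to a pitch command using three triangular membership functions and weighted-average defuzzification over singleton outputs. It is a deliberately small example: the membership shapes, the rule outputs in degrees and the function names are assumptions, and the actual controller uses more inputs than shown here.

```python
def triangle(x, left, peak, right):
    """Triangular membership function with feet at left/right."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


# Fuzzy sets for the normalised velocity error in [-1, 1].
ERROR_SETS = {
    "negative": (-2.0, -1.0, 0.0),
    "zero": (-1.0, 0.0, 1.0),
    "positive": (0.0, 1.0, 2.0),
}

# One rule per input set, each with a singleton output (degrees).
# These values are placeholders chosen for the example.
RULE_OUTPUT = {"negative": -10.0, "zero": 0.0, "positive": 10.0}


def fuzzy_pitch_command(velocity_error):
    """Return a pitch command from a velocity error."""
    error = max(-1.0, min(1.0, velocity_error))  # clamp to the universe
    weights = {name: triangle(error, *abc) for name, abc in ERROR_SETS.items()}
    total = sum(weights.values())
    # Weighted average of the rule outputs (defuzzification).
    return sum(weights[n] * RULE_OUTPUT[n] for n in weights) / total


# Example: a moderate positive error activates "zero" and "positive" equally.
pitch = fuzzy_pitch_command(0.5)
```

Because neighbouring sets overlap, the output changes smoothly as the error moves from one set to the next.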

Semi-automatic Reactive Control

The proposed semi-automatic control is based on force feedback, which is obtained by directly modifying the reference velocity at the higher control levels. Based on the tunable parameters of the problem, a repulsive velocity field is created and divided into distinct zones that express different levels of collision risk and depend on the relative position and velocity with respect to the object.
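A zone-based repulsive velocity can be sketched as below. The three zone names, the distance limits, the look-ahead horizon and the linear ramp are all assumptions chosen to illustrate the idea of risk levels that depend on relative position and velocity; they are not the tuned parameters of the actual system.

```python
def repulsive_velocity(distance, closing_speed, d_safe=4.0, d_danger=1.0,
                       horizon=1.0, v_max=1.0):
    """Return (zone, repulsive speed) for one obstacle.

    distance: current distance to the obstacle in metres.
    closing_speed: rate at which the distance shrinks (m/s, > 0 = approaching).
    """
    # Predicted distance after `horizon` seconds if nothing changes.
    predicted = distance - max(closing_speed, 0.0) * horizon
    if predicted >= d_safe:
        return "safe", 0.0
    if predicted <= d_danger:
        return "danger", v_max
    # Linear ramp between the two limits.
    ratio = (d_safe - predicted) / (d_safe - d_danger)
    return "warning", v_max * ratio


def modified_reference(pilot_velocity, direction_to_obstacle, distance,
                       closing_speed):
    """Subtract the repulsive term from the pilot's reference velocity.

    direction_to_obstacle is assumed to be a unit vector.
    """
    zone, magnitude = repulsive_velocity(distance, closing_speed)
    corrected = tuple(v - magnitude * d
                      for v, d in zip(pilot_velocity, direction_to_obstacle))
    return zone, corrected


# Example: pilot commands 1 m/s straight at an obstacle 2.5 m away.
zone, reference = modified_reference((1.0, 0.0), (1.0, 0.0), 2.5, 0.0)
```

In this sketch the pilot keeps full authority in the safe zone and is progressively overridden as the predicted distance shrinks.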

The distance to the detected objects is obtained from the 3D information provided by the depth sensor (Kinect V2), taking advantage of its ability to acquire data in outdoor environments. The actual depth of each pixel in the image is therefore known, so the position of the object relative to the UAV can be computed. This color-depth correlation is performed using the camera intrinsic and extrinsic parameters.
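The relation between a pixel, its depth and a 3D position follows the standard pinhole camera model, sketched below. The intrinsic values (`fx`, `fy`, `cx`, `cy`) and the extrinsic rotation and translation used in the example are placeholders, not calibration data for the Kinect V2, and the function names are hypothetical.

```python
def pixel_to_camera(u, v, depth, fx, fy, cx, cy):
    """Back-project pixel (u, v) with known depth into the camera frame."""
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return (x, y, depth)


def camera_to_body(point, rotation, translation):
    """Apply the extrinsic transform p_body = R * p_camera + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


# Placeholder calibration values, for illustration only.
FX, FY, CX, CY = 500.0, 500.0, 320.0, 240.0
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
SENSOR_OFFSET = (0.1, 0.0, 0.0)  # assumed mounting offset in metres

# Example: a pixel 100 columns right of the principal point, 2 m away.
in_camera = pixel_to_camera(420, 240, 2.0, FX, FY, CX, CY)
in_body = camera_to_body(in_camera, IDENTITY, SENSOR_OFFSET)
```

The intrinsics convert pixels to metric coordinates in the sensor frame, and the extrinsics move that point into the frame of the UAV (or of the color camera).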

Communication and Cooperation

Communication and cooperation among a team of unmanned vehicles are essential for several reasons. In long-duration tasks, or in case of vehicle failure, a single vehicle is unlikely to be enough to complete the task. Moreover, many civil application tasks require multiple vehicles in order to complete the task in an efficient, rapid and stable manner. For communication, all vehicles must share a common network. Furthermore, a decentralized multi-master approach is preferred, so the group of vehicles can continue operating if any member fails.

For cooperation, the system is formulated as a Multi-Robot Task Allocation (MRTA) problem. It takes different inputs that describe the vehicle status, and the allocation process depends on the status of each vehicle and of all other vehicles in the system.
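As a rough illustration of task allocation driven by vehicle status, the sketch below assigns tasks greedily: at each step the cheapest remaining vehicle-task pair is chosen. The status fields (`pos`, `battery`, `available`), the cost function and the greedy strategy are assumptions made for the example; the source does not specify which MRTA method is used.

```python
import math


def allocation_cost(vehicle, task_position):
    """Distance to the task, penalised when the battery is low."""
    distance = math.dist(vehicle["pos"], task_position)
    return distance / max(vehicle["battery"], 1e-6)


def allocate_tasks(vehicles, tasks):
    """Greedy one-task-per-vehicle allocation.

    vehicles: name -> {"pos": (x, y), "battery": 0..1, "available": bool}
    tasks: name -> (x, y)
    Returns a dict task name -> vehicle name.
    """
    free = {name for name, v in vehicles.items() if v["available"]}
    pending = set(tasks)
    assignment = {}
    while free and pending:
        # Pick the globally cheapest pair; ties are broken by name.
        _, vehicle_name, task_name = min(
            (allocation_cost(vehicles[v], tasks[t]), v, t)
            for v in free for t in pending
        )
        assignment[task_name] = vehicle_name
        free.remove(vehicle_name)
        pending.remove(task_name)
    return assignment


# Example: three vehicles, one of them out of service.
fleet = {
    "uav1": {"pos": (0.0, 0.0), "battery": 1.0, "available": True},
    "uav2": {"pos": (10.0, 0.0), "battery": 1.0, "available": True},
    "uav3": {"pos": (5.0, 0.0), "battery": 0.9, "available": False},
}
jobs = {"A": (1.0, 0.0), "B": (9.0, 0.0)}
plan = allocate_tasks(fleet, jobs)
```

Because each choice depends on which vehicles are still free, the result for one vehicle depends on the status of all the others, as described above.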

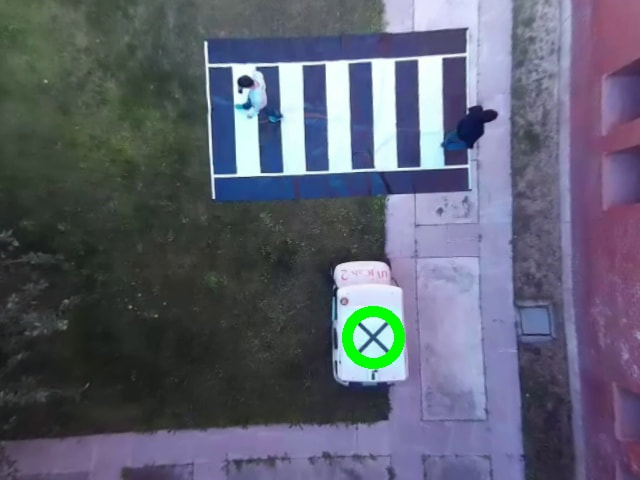

UAV-UGV Cooperation

A heterogeneous cooperation approach has been developed in which the UAV detects pedestrians and sends the information to ground vehicles. In this approach, an Unmanned Aerial Vehicle (UAV) assists an autonomous car in detecting pedestrians when visibility from the perspective of the car is restricted. For this purpose, the UAV is equipped with an onboard monocular camera and an embedded computer that processes the visual information and determines the positions of both the car and the pedestrians in the scene. The information extracted by the vision algorithms is shared with the other vehicles, which incorporate it into their models of the environment in order to navigate more safely.

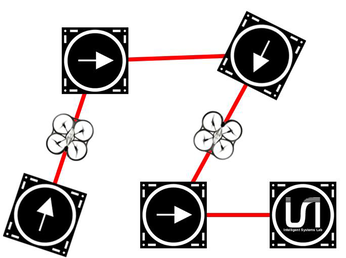

UAV Swarm

Within the line of vehicle cooperation, AMPL presents several works that focus on algorithms for dynamic trajectory planning that take into account the characteristics and restrictions of the drones in the swarm. The goal of these algorithms is to enable dynamic, coordinated and safe autonomous navigation considering all possible UAV-UAV and UAV-environment interactions.